Training large language models (LLMs) has posed a significant challenge due to their memory-intensive nature. The conventional approach of reducing memory consumption by compressing model weights often leads to performance degradation. However, a novel method, Gradient Low-Rank Projection (GaLore), by researchers from the California Institute of Technology, Meta AI, University of Texas at Austin, and Carnegie Mellon University, offers a fresh perspective. GaLore focuses on the gradients rather than the model weights, a unique approach that promises to enhance memory efficiency without compromising model performance.

This approach diverges from the traditional methods by focusing on the gradients rather than the model weights. By projecting gradients into a lower-dimensional space, GaLore allows for fully exploring the parameter space, effectively balancing memory efficiency with the model’s performance. This technique has shown promise in maintaining or surpassing the performance of full-rank training methods, particularly during the pre-training and fine-tuning phases of LLM development.

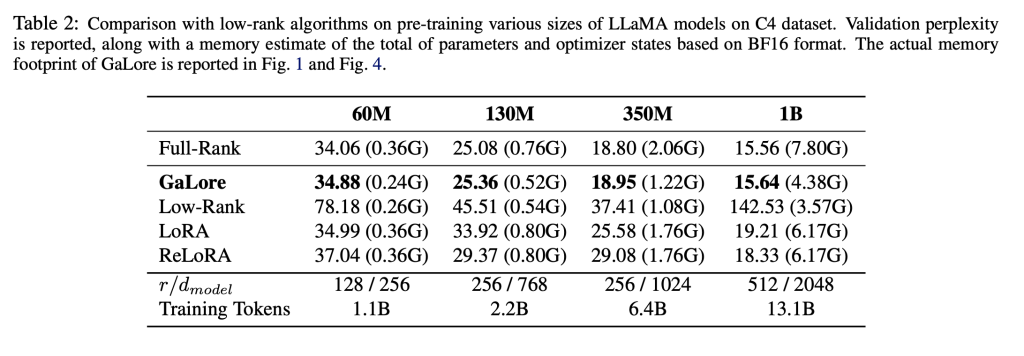

GaLore’s core innovation lies in its unique handling of the gradient projection, reducing memory usage in optimizer states by up to 65.5% without sacrificing training efficiency. This is achieved by incorporating a compact representation of gradients, which maintains the integrity of the training dynamics and enables substantial reductions in memory consumption. Consequently, GaLore facilitates the training of models with billions of parameters on standard consumer-grade GPUs, which was previously only feasible with complex model parallelism or extensive computational resources.

The efficacy of GaLore extends to its adaptability with various optimization algorithms, making it an integral addition to existing training pipelines. Its application in pre-training and fine-tuning scenarios across different benchmarks has demonstrated GaLore’s capability to deliver competitive results with significantly lower memory requirements. For instance, GaLore has enabled the pre-training of models with up to 7 billion parameters on consumer GPUs, a milestone in LLM training that underscores the method’s potential to transform the landscape of model development.

Comprehensive evaluations of GaLore have highlighted its superior performance to other low-rank adaptation methods. GaLore conserves memory and achieves comparable or better outcomes when applied to large-scale language models, underscoring its effectiveness as a training strategy. This performance is particularly evident in pre-training and fine-tuning on established NLP benchmarks, where GaLore’s memory-efficient approach does not compromise the quality of results.

GaLore presents a significant breakthrough in LLM training, offering a powerful solution to the longstanding challenge of memory-intensive model development. Through its innovative gradient projection technique, GaLore demonstrates exceptional memory efficiency while preserving and, in some cases, enhancing model performance. Its compatibility with various optimization algorithms further solidifies its position as a versatile and impactful tool for researchers and practitioners. The advent of GaLore marks a pivotal moment in the democratization of LLM training, potentially accelerating advancements in natural language processing and related domains.

In conclusion, key takeaways from the research include:

GaLore significantly reduces memory usage in training large language models without compromising performance.

It utilizes a novel gradient projection method to explore the parameter space fully, thus enhancing training efficiency.

GaLore is adaptable with various optimization algorithms, seamlessly integrating into existing model training workflows.

Comprehensive evaluations have confirmed GaLore’s capability to deliver competitive results across pre-training and fine-tuning benchmarks, demonstrating its potential to revolutionize the training of LLMs.

Check out the Paper. All credit for this research goes to the researchers of this project. Also, don’t forget to follow us on Twitter and Google News. Join our 38k+ ML SubReddit, 41k+ Facebook Community, Discord Channel, and LinkedIn Group.

If you like our work, you will love our newsletter..

Don’t Forget to join our Telegram Channel

You may also like our FREE AI Courses….

![]()

Hello, My name is Adnan Hassan. I am a consulting intern at Marktechpost and soon to be a management trainee at American Express. I am currently pursuing a dual degree at the Indian Institute of Technology, Kharagpur. I am passionate about technology and want to create new products that make a difference.